To see why the wireless earbuds are a problem for audiophiles, we need to begin at the opposite end of the process, when an analog signal (music) becomes a digital representation.

There are two steps in the process: first, the continuous analog signal is sliced in time, "sampled," so that it's now represented by a sequence of analog levels; second, those analog levels are compared with a finite scale, the digital scale, and the best approximation is used to represent the level, thusly:

There are two sources of information loss (or "noise") in this process:

1. By taking level slices of a continuous curve, the sampling creates an imperfect representation of the curve; that's called sampling noise. The longer the slices, that is the less often the analog input is sampled, the higher this sampling noise.

2. By forcing the analog samples, which are on a continuous scale, to match the limited levels of a digital scale, the process creates a second type of noise, quantization noise. In the above example, the difference between the digital output for periods (1) and (2) is higher than the difference between the analog samples for those periods. Also, periods (2) and (3) have the same digital output, despite the different analog sample levels.

To reduce sampling noise we can sample more often, that is, have thinner slices of time so that there are more analog samples to represent the same curve. To lower quantization noise we can have more digital levels; typically the number of levels is a power of 2, since we use binary coding.
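The two steps can be sketched in a few lines of Python. This is only an illustration under assumed conventions: the function names `sample` and `quantize`, the input range of -1 to 1, and the 1 kHz test tone are all made up for the example, not taken from any real converter.

```python
import math

def sample(signal, duration_s, rate_hz):
    """Slice a continuous signal in time: one analog level every 1/rate_hz seconds."""
    count = round(duration_s * rate_hz)
    return [signal(k / rate_hz) for k in range(count)]

def quantize(x, bits):
    """Match x (assumed to lie in [-1, 1]) to the nearest of 2**bits evenly spaced levels.

    Returns (digital code, analog value that the code stands for).
    """
    levels = 2 ** bits
    step = 2.0 / (levels - 1)
    code = round((x + 1.0) / step)
    code = max(0, min(levels - 1, code))
    return code, code * step - 1.0

def tone(t):
    """Hypothetical input: a 1 kHz sine wave."""
    return math.sin(2 * math.pi * 1000 * t)

samples = sample(tone, 0.001, 8000)           # one millisecond at 8,000 samples/s
coarse = [quantize(x, 3) for x in samples]    # 8 digital levels
fine = [quantize(x, 16) for x in samples]     # 65,536 digital levels

# Worst-case quantization error for each resolution
coarse_err = max(abs(x - q) for x, (_, q) in zip(samples, coarse))
fine_err = max(abs(x - q) for x, (_, q) in zip(samples, fine))
```

With only 3 bits, two clearly different analog levels such as 0.30 and 0.50 land on the same digital code, which is the effect described in item 2; at 16 bits the worst-case error shrinks by several orders of magnitude.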

For example, CD encoding used 44,100 samples per second per channel at 16 bits of resolution (allowing $2^{16}$ or 65,536 different levels); this was deemed enough for music since it allowed for an upper frequency limit of over 20 kHz (generally considered the limit of human hearing) and a dynamic range of 96dB (each bit adds 6dB; the choice of 96dB was widely panned by audiophiles as too small).*

As with everything in engineering (and in life, really) this was a matter of trade-offs. Since then, other standards such as SACD have gone beyond these limits. But the problem of trade-off remains, typically that of space or bandwidth against quality of reproduction.

Before compression, the total number of bits necessary to represent a stereo signal sampled at a rate of $s$ samples per second and a number of digital levels $2^{N}$ is $2 \, s \, N$ bits per second; because there's a lot of redundancy in music (no, it's not just Philip Glass), there are opportunities for compression.
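Plugging the CD numbers into these formulas is a quick sanity check. The helper names below are made up for the illustration; the dynamic range uses the usual $20 \log_{10}(2^{N})$ rule, which is where "each bit adds 6dB" comes from.

```python
import math

def dynamic_range_db(bits):
    """Ratio between the largest and smallest level of a 2**bits scale, in dB."""
    return 20 * math.log10(2 ** bits)

def raw_bitrate_bps(sample_rate_hz, bits, channels=2):
    """Uncompressed bits per second: channels * s * N."""
    return channels * sample_rate_hz * bits

db_per_bit = dynamic_range_db(1)        # roughly 6.02 dB
cd_range = dynamic_range_db(16)         # roughly 96.3 dB
cd_rate = raw_bitrate_bps(44_100, 16)   # 1,411,200 bits per second, stereo
```

So an uncompressed CD-quality stereo stream needs about 1.4 Mbit/s before any error correction or other overhead is added.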

Sometimes the music is compressed without losing information, called "lossless compression"; an example is FLAC, which has all the information necessary to reconstruct the original uncompressed digital music file. This is similar to compressing a data file for transmission; after decompression the reconstituted file must be identical to the original. (FLAC uses regularities of music to compress data more efficiently than a general compression algorithm.)

Sometimes the compression loses information that is deemed unnecessary, called "lossy compression"; MP3 compression is lossy. Lossy compression adds sampling and/or quantization noise to the original data, though the design of the compression scheme is supposed to minimize the aural effect of those additional errors in some trade-off with the compression ratio.

On the other hand, because plastic CDs and wireless signals sometimes get damaged, some space or bandwidth has to be used for error-correction codes and other digital administrative minutiae. When the iPhone connects to the earbuds by wire, it can send an analog signal, but when it connects via Bluetooth, the signal is digital, must be compressed for transmission and requires a lot of network administration detritus.

So, one of the first questions wireless earbuds raise is: is Apple sending enough data over that Bluetooth connection for an audiophile? This isn't the only question, though.

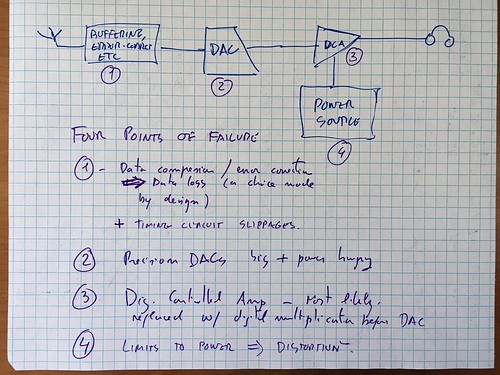

Using the very best in advanced engineering CAD displays, we can see that this is only the first of four classes of problems:

Problem class 1: Quality of the Data

Apple's decision to go wireless changes the transmission of data between the main processor and the digital-to-analog conversion from a wired connection inside the phone, protected from most interference, to a digital transmission over a noisy channel (Bluetooth). That means that a lot of other things have to be transmitted, in particular handshaking data, error-correction codes, and diagnostic signals.

The problem is mostly that Apple went from being a perfectionist's personal fiefdom (during the reign of His Steveness, may his divine hand bless you with a bounty of new MacBookPros) to being a company looking to make a buck. And companies looking to make a buck make different trade-offs.

His Steveness wanted the best. He might not have gotten it always, but he made products for people who wanted to brag they had the best. (Even when by all objective measures they didn't.) But now, the whole company seems to be into the "milk our brand while it lasts" phase of its corporate life cycle, so I'd venture that their trade-offs are much closer to the general public's than those of the fringes.

His Steveness ran the company targeting the fringes, so that the general [Apple-buying] public could

Not anymore. Not for Apple.

Taking a lossy compression like MP3 and compressing even further for the earbuds (possibly limiting both the frequency and the dynamic ranges) isn't a recipe for audiophile sound. It does work for phone calls, and that's probably what most phones are used for. But for music... no.

(At this point I should mention that digital audiophiles have moved on from Apple a while ago, putting up with

Problem class 2: Quality of Digital To Analog Conversion

A second source of problems is the digital-to-analog conversion circuitry. Among the many problems that can come from a cheap (and low-power, which is important in wireless earbuds) DAC, the most obvious are reproduction errors (the same digital input doesn't map to the same voltage consistently, or the difference between digital levels doesn't correspond to the appropriate difference in voltages). This isn't that much of a problem in 2016 (it used to be in the 1990s).

Another, more serious problem has to do with the precision of the timing, which is one of the major reasons why if you care about computer music you'll get an external DAC, possibly a Chord Mojo or an Audioquest Dragonfly. (Or maybe something from the brand that can't be named.)

Even small errors in timing (some of which are induced by the buffering and data processing necessary to extract the digital music from the wireless signal) can lead to significant phase distortion, in that the 'time' used to reproduce the music doesn't match real time.

To illustrate this problem, consider the following phase-distorted sine wave (slightly exaggerated to make the case visible, but even very small phase distortions sound horrible):

Comparing the two periods of the distorted wave, T1 and T2, you can see that phase distortion in this case induces frequency variation. This means that instruments will sound as if they are out-of-tune, and [if you're over 30 you'll get this reference] like your brand-spanking-new iPhone is a cassette player running out of battery power.
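A toy model makes the T1 versus T2 point concrete. Everything here is an assumption chosen for the illustration: a 1 Hz tone, and a playback clock that wobbles sinusoidally around real time with a made-up depth and rate. It is not a model of any particular DAC or Bluetooth stack.

```python
import math

def warped_time(t, depth=0.1, wobble_hz=0.25):
    """Hypothetical playback clock: real time plus a slow sinusoidal timing error."""
    return t + depth * math.sin(2 * math.pi * wobble_hz * t)

def crossing_time(n, lo=0.0, hi=10.0):
    """Bisect for the real time at which a 1 Hz tone on the warped clock completes n cycles.

    Works because warped_time is strictly increasing for these parameters.
    """
    for _ in range(60):
        mid = (lo + hi) / 2
        if warped_time(mid) < n:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

t0, t1, t2 = (crossing_time(n) for n in range(3))
T1 = t1 - t0   # first period, shorter than 1 s: the tone plays sharp
T2 = t2 - t1   # second period, longer than 1 s: the tone plays flat
```

The two periods still add up to two seconds, so the average pitch is right, but the pitch heard from one cycle to the next (1/T1, then 1/T2) drifts above and below the true 1 Hz.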

If you accidentally downloaded a [poorly encoded] FLAC file from a torrent site you accidentally fell into while looking for a French Literature study group, accidentally ran that FLAC file through a FLAC to MP3 converter that you accidentally had on your computer, then accidentally played it and noticed strange warbling and high pitch glitches, that's an entirely accidental observation of very bad phase distortion.

This is why any audiophile wants a DAC that uses its own timing circuitry and buffer, rather than depend on the shared circuitry involved in network management etc.

Problem class 3: Fixed- vs Variable-Gain Analog Amplification

Many computers (and I assume all iPods and iPhones) have a fixed gain amplifier for the reconstructed analog signal. That means that changes in volume are created by multiplying the digital signal by digital fractions prior to conversion to analog. In essence, removing data from the signal.

For example, to halve a digital number, all you need to do is shift all bits right, disposing of the least significant bit and adding a zero at the most significant bit (or, depending on how negative numbers are encoded, adding a copy of the previous high bit). This means that one bit of data has been lost. The sound is not just half-volume, but also half-dynamic range; each halving of volume removes one bit or 6 dB of dynamic range:

$\texttt{[1000101001011011]} \rightarrow \texttt{[0100010100101101]} \rightarrow \texttt{[0010001010010110]}$

If the original dynamic range of the data was higher than that of humans (CD or CD-derived online purchase or stream? No, it wasn't!), then this loss isn't important. Otherwise (i.e. basically always), your music just became lower resolution.
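The same shift can be reproduced in Python, treating the 16-bit word as unsigned for simplicity (an assumption; real audio samples are normally signed). The function name `halve` is made up for the sketch.

```python
def halve(sample, bits=16):
    """Halve an unsigned sample by shifting right one place; the lowest bit is discarded."""
    return (sample >> 1) & ((1 << bits) - 1)

original = 0b1000101001011011
half = halve(original)       # 0100010100101101
quarter = halve(half)        # 0010001010010110

# Turning the volume back up digitally cannot bring the discarded bits back:
restored = quarter << 2      # 1000101001011000, the two low bits are now zero
lost = original - restored   # 3
```

Two halvings throw away the two lowest bits for good, which is the 12 dB or so of resolution that no later stage can recover.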

In a better sound system (i.e. any external DAC/amp), the analog signal out of the DAC goes into an analog amplifier that has variable gain. In some systems the variable gain is controlled with a knob, in others using a digital interface. But in both cases the amplifier tends to be a digitally controlled variable gain amplifier, in which the analog signal path is all analog and only the gain is controlled by a digital system (typically a feedback network of switchable topology).

(An alternative approach is to take the, say, 16-bit data and shift it 8 bits up into the most significant bits of a 24-bit word, then multiply that by an 8-bit fraction (thus allowing for 256 different volume levels) and feed the result to a 24-bit DAC, whose result will feed a fixed-gain amplifier. This allows for the whole process to be digital as long as possible.)
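A rough sketch of that alternative, assuming signed 16-bit input and a volume setting from 0 to 255 interpreted as the fraction volume/256 (both conventions are assumptions for the example, as is the function name):

```python
def scale_16_to_24(sample16, volume8):
    """Place a signed 16-bit sample in the top of a 24-bit word, then scale by volume8/256."""
    wide = sample16 << 8            # 16-bit data now sits in the upper bits of 24
    return (wide * volume8) >> 8    # multiply by the 8-bit fraction

quiet_detail = scale_16_to_24(1, 128)        # lowest 16-bit level at half volume
loud_peak = scale_16_to_24(-32768, 255)      # most negative sample at top volume
same_in_16_bits = 1 >> 1                     # the 16-bit shift approach gives 0
```

At half volume the smallest 16-bit level survives as 128 in the 24-bit word, where a plain 16-bit shift would have erased it, and the loudest case still fits inside the signed 24-bit range.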

The amplification issue alone is worth getting an external DAC; but it's important to also consider the next point.

(My Audioquest Dragonfly is usually plugged into a powered USB hub, so it doesn't rely on the computer USB bus power.)

Problem class 4: Power issues

And this is the big big one. You like loud music? Well, expect distortion as soon as the volume gets loud. Because most of these small batteries aren't able to deliver the current needed fast enough. So what happens is that as the output voltage increases by $\Delta v$, requiring a $\propto (\Delta v)^2$ increase in power, the amplifier "fixed" gain starts to decrease, more so the higher the $\Delta v$, and we get... well, we get this:

That compression of the sine wave makes it sound nasal. When your music sounds like that, it's a sign that your amplifier isn't able to draw enough power. Note that this is different from the clipping that happens if the transistors in the output stage enter the saturation regime; in that case, instead of a smooth scrunched sine wave, we get a flat-volume square wave, which makes everything sound like a heavy metal guitar.**
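The difference between the two shapes can be mimicked numerically. The `tanh` curve below is just a convenient stand-in for "gain that drops as the output gets larger"; it is an assumption for illustration, not a model of any real amplifier, and the drive and limit values are arbitrary.

```python
import math

def soft_compress(x, drive=2.0):
    """Smoothly reduce gain as |x| grows (stand-in for a power-starved amplifier)."""
    return math.tanh(drive * x) / math.tanh(drive)

def hard_clip(x, limit=0.5):
    """Flatten everything beyond +/- limit (stand-in for a saturated output stage)."""
    return max(-limit, min(limit, x))

wave = [math.sin(2 * math.pi * k / 32) for k in range(32)]   # one cycle, 32 points
squashed = [soft_compress(x) for x in wave]   # rounded, scrunched sine
clipped = [hard_clip(x) for x in wave]        # flat tops: heading toward a square wave

flat_points = sum(1 for y in clipped if abs(y) == 0.5)
```

The soft version still reaches the same peak but bulges on the way there, while the hard-clipped version spends most of the cycle pinned at the limit.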

Ever wonder why 100W audiophile amplifiers have external power supplies that look bigger than the 1000W power supply on a computer server? That's because they are. Abundant power is an essential part of clean amplification, and without clean amplification the rest doesn't matter. And the way you get abundant power is you have a lot of slack available.

Care to bet how much slack power those earbuds have?

Does it matter?

To whom?

To me, no. I have a number of other, better sources of music, and I use the iPhone as an internet device and, astonishingly, as a phone. Weird, I know.

To those who just want to listen to podcasts, audiobooks, maybe some music in noisy environments? Of course not.

To an audiophile, who for some unexplained reason doesn't get a cheap lossless player like the Fiio X5? Yes, it matters, but this audiophile has the option to get the new Audioquest Dragonfly RED, with a tail adapter for the iPhone, so that's what s/he should do. Pair that with a nice pair of big cans like the Sennheiser 650s (in my opinion the best quality/price cans on the market), and you're set.

To an audiosnob who can't tell the difference between 866kbps Apple lossless and 32kbps mono MP3 but insists on having "the very best," preferably Bang & Olufsen or some other design-heavy, sound quality-light, high-recognition brand? Yes, it will matter a lot. (Audiosnobs have already invaded Head-Fi and other audiophile forums arguing against the iPhone 7 from their usual position, ignorance.)

-- -- -- -- Footnotes -- -- -- --

* Yes, the Nyquist limit for 44.1 kHz sampling is 22.05 kHz... as long as the anti-aliasing filter is a perfect step function in the frequency domain. The universe containing exactly zero perfect step function anti-aliasing filters, I and the entire engineering profession prefer to hedge by saying that it's "over 20 kHz."

When audiophiles say that LPs (Long Play records, aka "vinyl," Olivia Wilde not included) have better sound than CDs, they are usually referring to dynamic range. It's not just that CDs have only 96dB of range, but much worse, that in transferring the music from the master recordings to CD, sound

(The standard example is the butchery of Dire Straits' "Money For Nothing," which was so compressed for the CD that it lost the whole point of the intro. Hey, though I listen almost exclusively to art music and jazz, nostalgia has its place.)

** That's because the sound effect that makes electric guitars sound like that is precisely pre-amping the sound so high that the output stage transistors will saturate and clip the waveform square, at the same time removing almost all volume envelope effects. You can do this to any instrument including voice.

Added later: yes, I know all these effects are digital now. Kids these days! In my day you built your effects with transistors, µA741s and sometimes NE555s. None of that "digitize, FFT, do whatever, convert out" nonsense. We had grit!