Many presenters who are hard workers don't care for working on their presentations. That's odd.

Many researchers, data scientists, academics, and other knowledge discoverers are not very good at presenting their work. They argue, somewhat reasonably, that their strength is in formulating questions, collecting and processing data, and interpreting the results. Presentations are an afterthought.

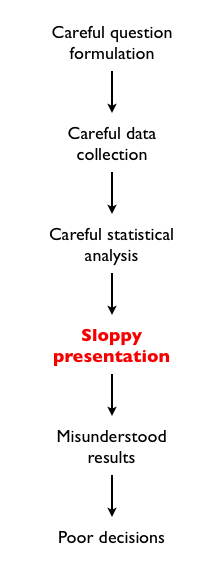

The problem with this is the following:

If the purpose of finding out a true fact is to influence decision-makers, communicating that fact clearly is an essential step of the whole process. In fact, all the work done prior to the presentation will be wasted if the message doesn't get across.

Does it make sense to waste months of work discovering knowledge because one isn't in the mood to spend a few hours crafting a presentation?

Non-work posts by Jose Camoes Silva; repurposed in May 2019 as a blog mostly about innumeracy and related matters, though not exclusively.

Thursday, June 30, 2011

Wednesday, June 29, 2011

The problem with "puzzle" interview questions: II - The why

Part I of my post against the puzzle interview is here.

There are two related "why?"s about puzzles in interviews: 1) Why do companies use puzzles as interview devices? 2) Why are puzzles inappropriate for that purpose now?

The last word answers the first question, really: because in the past puzzles were a reasonable indicator of intelligence, perseverance, interest in intellectual pursuits, and creativity. Since these are the characteristics that firms say they want workers to have, puzzles were, in the past, appropriate measurement tools.

Why in the past but not now, then?

In the past, before the puzzle-based interview was widely adopted, people likely to do well in one were those with a personal interest in puzzles. People who spent time solving puzzles instead of playing sports or socializing with members of the opposite sex -- nerds -- incurred social and personal costs. This required interest in intellectual pursuits and perseverance. Now that puzzles are used as interview tools, they are just something else to cram for and find shortcuts to; that's the mark of those intellectually uninterested and lacking perseverance.

Furthermore, since they were solving puzzles for fun, nerds were actually solving them instead of attending seminars and buying books that teach the solutions and mnemonics to solve variations on those solutions (what people do now to prepare for the puzzle interview). Solving puzzles from a cold start requires intelligence and creativity; memorizing solutions and practicing variations requires only motivation.

In technical terms, the puzzles were a screening device that decreased in power over time as more and more people of the undesired type managed to get pooled with the desired type.
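To make the pooling argument concrete, here is a minimal sketch using Bayes' rule. Every number in it is hypothetical, chosen only for illustration: the share of desired-type candidates (`base_rate`) and the rates at which each type passes the puzzle screen are assumptions, not data.

```python
def share_desired_among_passers(base_rate, pass_desired, pass_undesired):
    """Fraction of candidates who pass the screen and are of the desired type.

    base_rate:      share of desired-type candidates in the applicant pool
    pass_desired:   probability that a desired-type candidate passes
    pass_undesired: probability that an undesired-type candidate passes
    """
    passed_desired = base_rate * pass_desired
    passed_undesired = (1 - base_rate) * pass_undesired
    return passed_desired / (passed_desired + passed_undesired)


# Hypothetical "before": few undesired-type candidates can solve puzzles.
before = share_desired_among_passers(0.2, 0.8, 0.05)

# Hypothetical "after": prep seminars raise the undesired type's pass rate.
after = share_desired_among_passers(0.2, 0.8, 0.6)

print(f"before cramming: {before:.2f}")
print(f"after cramming:  {after:.2f}")
```

With these made-up rates, the share of desired-type candidates among those who pass falls as the undesired type learns to pass. If both types passed at the same rate, the screen would return the base rate and carry no information at all.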

Every metric will be gamed, both direct measures and proxies. Knowing this, firms should focus on the direct metrics. They will be gamed, but at least effort put into gaming those may be useful to actual performance later.

Memorized sequences of integers from a puzzle-prep seminar definitely will not be.


Labels: management, Puzzles, thinking

The problem with "puzzle" interview questions: I - The what

I like puzzles. I solve them for fun; I don't like it when companies use them for recruiting, though.

Some companies use puzzle-like questions as interview devices for knowledge workers. Other than the obvious inefficiency of using proxies when there are direct measures of performance, many of these questions penalize creativity and thinking outside the box defined by the people who are conducting the interview (usually the potential coworkers).

Here's a thought: if hiring a programmer, ask a programming question. For example, give the interviewee a snippet of code and ask what its function is; ask how it could be optimized; ask what would happen with some change to the code or how a bug in a standard subroutine would affect the robustness of the code.

Here's a second thought: if hiring statisticians, instead of trying to trip them with probability puzzles (especially when your answer might be wrong), show them a data-intensive paper and ask them to explain the results, or to consider alternative statistical techniques, or to point out limitations of the techniques used. Perhaps even -- oh what a novel idea -- consider asking them to help with an actual problem that you're actually trying to solve.

My job interviews, in academe, were like these thoughts: I was asked, reasonably enough, about my training, my research, my teaching, and to demonstrate the ability to present technical material and answer audience questions; job-related skills, all, even though some interviewers were interested in puzzles.

In social events, however, some acquaintances have asked me questions from interviews; here are a couple of responses one could give that are correct but unacceptable to most interviewers:

How would you move Mount Fuji?

Well, in a universe in which the Japanese people and government would allow me to play around with one of their most important landmarks, I'd probably be too busy simuldating Olivia Wilde and Milla Jovovich to dabble in minor construction projects. But if I had to, I'd use a location-to-location transport beam from my starship, the USS HedgeFund.

Or did you want to know whether I can come up with the formula for the volume of a truncated cone?
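For reference, the formula in question, assuming the mountain is idealized as a truncated right circular cone with base radius \(R\), top radius \(r\), and height \(h\) (symbols introduced here for illustration):

\[ V = \frac{\pi h}{3} \left( R^2 + R r + r^2 \right). \]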

What is the next number in this sequence: 2, 3, 5, 7, 11,...

It's pi-cubed. You are enumerating in increasing order the zeros of the following polynomial:

\[ (x - 2) (x - 3) (x - 5) (x - 7) (x - 11) (x - \pi^3). \]

Or did you think that there was only one sequence starting with the first five prime numbers?
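A quick check of the pi-cubed answer, as a minimal sketch: the names `ROOTS`, `p`, and `zeros_in_increasing_order` are made up for this illustration. It evaluates the polynomial above at each claimed zero and lists the zeros in increasing order.

```python
import math

# The six zeros of the polynomial in the text.
ROOTS = [2, 3, 5, 7, 11, math.pi ** 3]


def p(x):
    """Evaluate (x-2)(x-3)(x-5)(x-7)(x-11)(x-pi^3) at x."""
    result = 1.0
    for root in ROOTS:
        result *= (x - root)
    return result


def zeros_in_increasing_order():
    """Return the zeros of p sorted from smallest to largest."""
    return sorted(ROOTS)


sequence = zeros_in_increasing_order()
print(sequence[:5])          # the five primes given in the question
print(sequence[5])           # the next term: pi cubed, about 31.006
print(all(p(r) == 0 for r in ROOTS))
print(p(13) == 0)            # 13, the "expected" answer, is not a zero of p
```

Any sixth number larger than 11 would serve equally well in place of pi-cubed, which is the point: five terms do not determine a sequence.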

Bob has two children, one is a boy. What is the probability that the other is a boy?

I made a video about that. (Even after that video, or my live explanation, some people insist on the wrong answer, 1/3; proof that there are few things more damaging than a little knowledge matched with a big insecure ego.)

-- -- -- -- --

I'll have a later post explaining the deeper problem with using puzzles (and its dynamics), part II of this.


Labels: interviewing, management, Puzzles, thinking
